Which LLM is the best?

🚀 VerifAI knows the Best Answer!

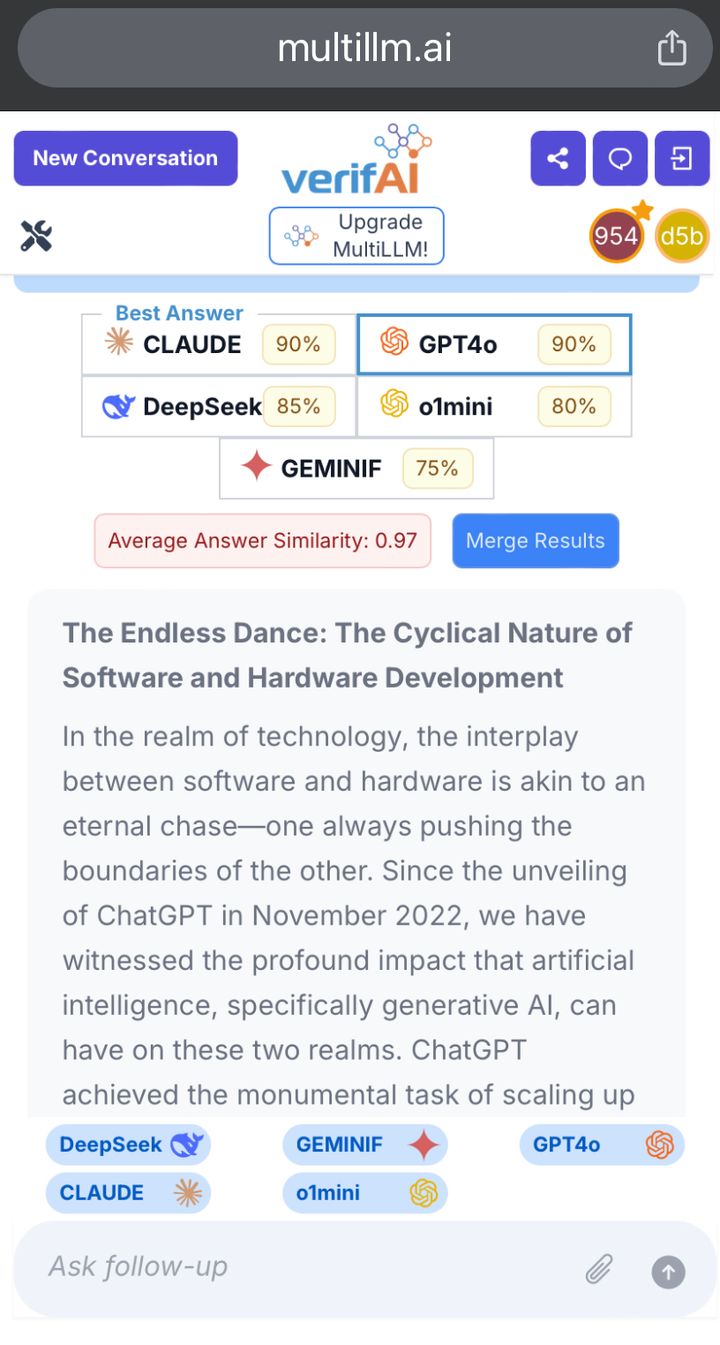

🚀 Why do we need this? Because no single LLM is Best for all prompts.

🚀 What’s more, LLMs continue to leapfrog each other in performance, so the Best LLM for a given prompt keeps changing.

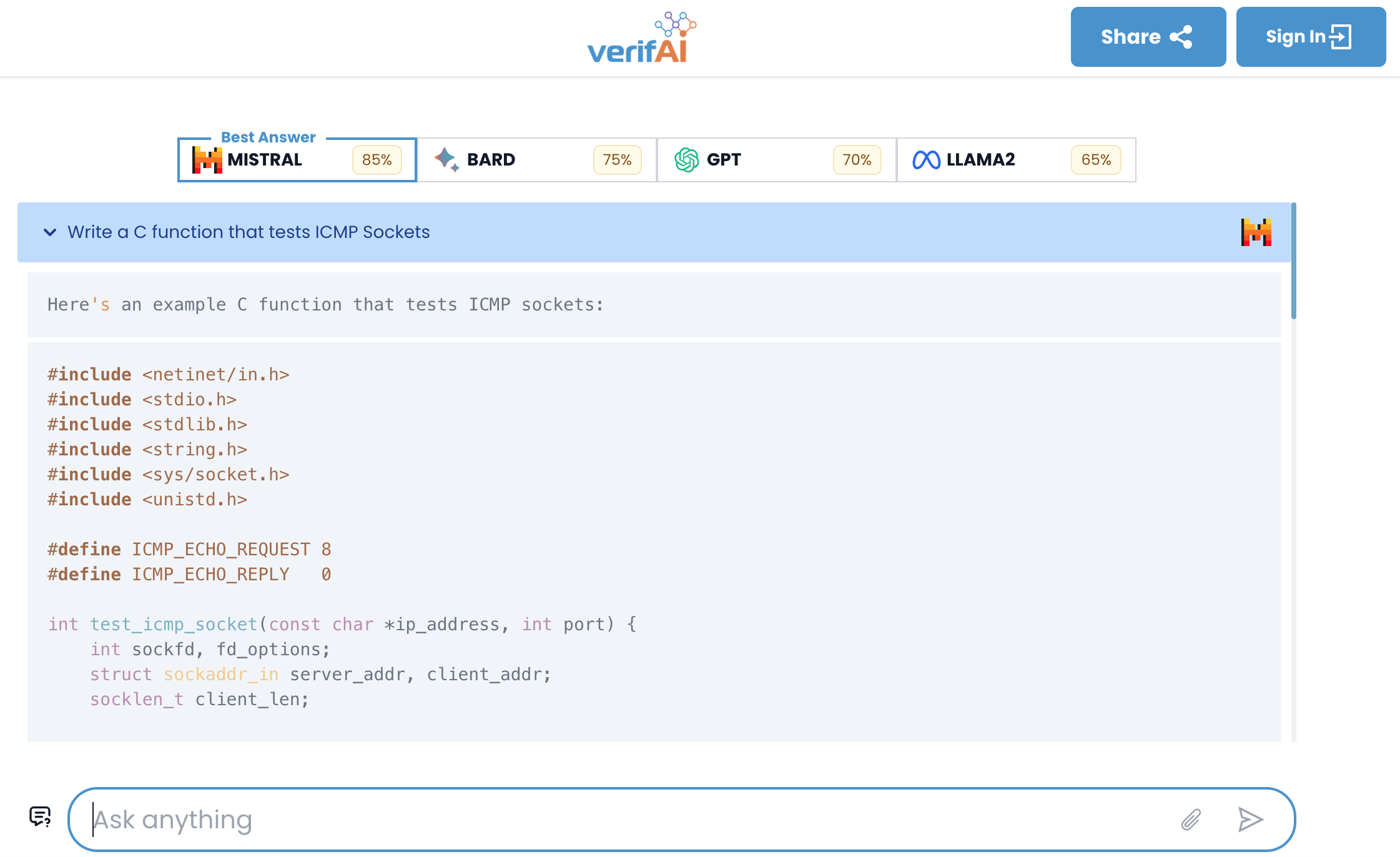

| Typical Prompts (Jan 2024) | Best Answer |
|---|---|
| Write Verilog code for a GPU shader module | Mistral |
| Write a C function to test the ICMP protocol | Bard |
| Write a Python function to find a root of the function f using Newton's method | GPT4 |
| How do you measure inflation? | LLAMA2 |

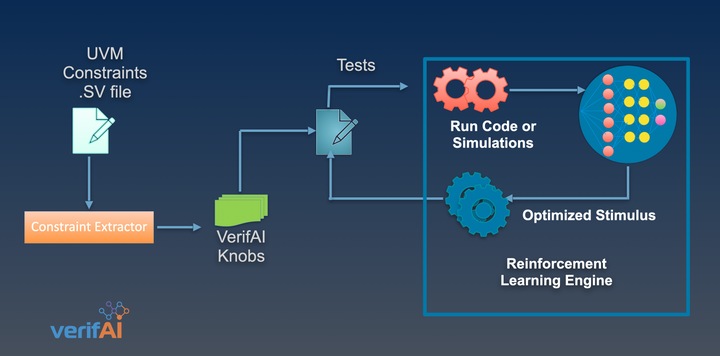

🚀 Our Domain Specific Reasoning LLM uses multiple weighted scoring parameters for text and code to find the Best LLM for each unique prompt.
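To make the idea of weighted scoring concrete, here is a minimal Python sketch of how answers from several models could be ranked. It is not VerifAI's implementation: the parameter names (`compiles`, `tests_passed`, `readability`, and so on), the weights, the `Candidate` class, and the placeholder models `model_a` and `model_b` with their scores are all assumptions made up for illustration.

```python
from dataclasses import dataclass

# Hypothetical scoring parameters and weights. The actual parameters and
# weights used for text and code are not described in this document.
TEXT_WEIGHTS = {"relevance": 0.5, "coherence": 0.3, "conciseness": 0.2}
CODE_WEIGHTS = {"compiles": 0.4, "tests_passed": 0.4, "readability": 0.2}


@dataclass
class Candidate:
    model: str    # name of the LLM that produced the answer
    scores: dict  # per-parameter scores, each assumed to lie in [0, 1]


def weighted_score(scores, weights):
    """Weighted average of per-parameter scores; missing parameters count as 0."""
    total_weight = sum(weights.values())
    weighted_sum = sum(w * scores.get(name, 0.0) for name, w in weights.items())
    return weighted_sum / total_weight


def pick_best(candidates, weights):
    """Return the candidate whose answer has the highest weighted score."""
    return max(candidates, key=lambda c: weighted_score(c.scores, weights))


# Made-up scores for two placeholder models answering one coding prompt.
candidates = [
    Candidate("model_a", {"compiles": 1.0, "tests_passed": 0.5, "readability": 0.9}),
    Candidate("model_b", {"compiles": 1.0, "tests_passed": 1.0, "readability": 0.4}),
]

best = pick_best(candidates, CODE_WEIGHTS)
print(best.model)  # prints "model_b"
```

In this sketch, a text prompt would be ranked the same way with `TEXT_WEIGHTS`. Because the ranking is recomputed for every prompt, the winning model can differ from one prompt to the next.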

Try it and see for yourself at multillm.ai